Remaining-data-free Machine Unlearning by Suppressing Sample Contribution

MU-Mis Algorithm

Abstract

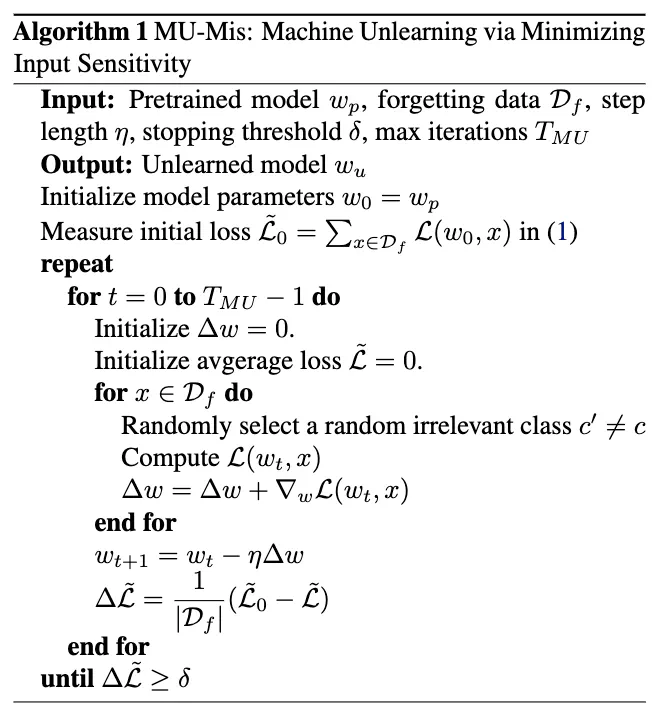

Machine unlearning (MU) aims to make a well-trained model forget specified data, which is practically important because of the “right to be forgotten”. The unlearned model should approach the retrained model, in which the forgetting data are not involved in training and hence make no contribution. Given the forgetting data’s absence during retraining, we argue that unlearning should withdraw their contribution from the pre-trained model. The challenge is how to quantify and detach a sample’s contribution to the dynamic learning process using only the pre-trained model when tracing the learning process is impractical. We first show theoretically that a sample’s contribution during learning is reflected in the learned model’s sensitivity to that sample. We then design a novel method, MU-Mis (Machine Unlearning by Minimizing input sensitivity), to suppress the contribution of the forgetting data. Experimental results demonstrate that MU-Mis unlearns effectively and efficiently without using the remaining data. This is the first time that a remaining-data-free method outperforms state-of-the-art (SoTA) unlearning methods that use the remaining data.
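To make the idea of minimizing input sensitivity concrete, the following is a minimal toy sketch, not the MU-Mis implementation. The abstract does not state the exact objective, so the sketch assumes that sensitivity is the squared norm of the model output’s gradient with respect to the input. The scalar model, the finite-difference gradients, and the names `model`, `input_sensitivity`, and `unlearn_step` are all hypothetical. Only the forgetting sample is used, mirroring the remaining-data-free setting.

```python
import math

def model(params, x):
    # Toy scalar model f(x) = v * tanh(w . x); a stand-in, not the paper's network.
    w, v = params
    return v * math.tanh(sum(wi * xi for wi, xi in zip(w, x)))

def input_sensitivity(params, x, eps=1e-5):
    # Assumed sensitivity measure: squared norm of df/dx,
    # estimated with central finite differences.
    total = 0.0
    for i in range(len(x)):
        hi = list(x); hi[i] += eps
        lo = list(x); lo[i] -= eps
        total += ((model(params, hi) - model(params, lo)) / (2 * eps)) ** 2
    return total

def unlearn_step(params, x_forget, lr=0.05, eps=1e-4):
    # One gradient-descent step on the parameters that lowers the
    # sensitivity at the forgetting sample; no remaining data is touched.
    w, v = params
    flat = list(w) + [v]

    def loss(p):
        return input_sensitivity((p[:-1], p[-1]), x_forget)

    grads = []
    for i in range(len(flat)):
        hi = list(flat); hi[i] += eps
        lo = list(flat); lo[i] -= eps
        grads.append((loss(hi) - loss(lo)) / (2 * eps))
    new = [p - lr * g for p, g in zip(flat, grads)]
    return (new[:-1], new[-1])

params = ([0.8, -0.5], 1.2)   # hypothetical "pre-trained" parameters
x_forget = [0.3, 0.1]         # hypothetical forgetting sample

before = input_sensitivity(params, x_forget)
for _ in range(50):
    params = unlearn_step(params, x_forget)
after = input_sensitivity(params, x_forget)

print(before, after)
```

In a real setting the finite differences would be replaced by automatic differentiation, and the objective would follow the paper’s definition rather than this simplified one.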

Publication

In arXiv